How Do LLM Citations Work? The Ultimate Guide to AI Visibility (2026)

Your customers are already asking AI what to buy. Are you showing up when they do?

71% of consumers already want AI built into their shopping experience (Capgemini). That’s not a future preference; it’s a current expectation that most brands aren’t meeting yet.

Here’s the problem, though.

Most eCommerce brands have no idea whether they’re actually appearing in those LLM citations or getting passed over entirely. If AI search tools aren’t pulling from your content, you’re missing out on traffic that converts at significantly higher rates than traditional organic search.

This isn’t just another digital marketing shift. It’s a structural change in how your audience discovers and evaluates information.

It’s also rewriting the discovery rules that eCommerce brands have spent years optimizing for. If you want to go deeper on that shift, our guide on eCommerce AI SEO covers the ranking strategies in full.

In this post, you’ll learn:

- How AI models decide which sources to cite and why most content gets ignored.

- The content and technical signals that make a source citation-worthy.

- How ChatGPT, Perplexity, and Google AI Overviews handle citations differently.

- A clear way to measure and grow your AI visibility over time.

Key Takeaways

- LLM citations bring higher-converting traffic than traditional search.

- RAG drives most citations, so content freshness and structure are non-negotiable.

- Query fan-out turns one search into multiple citation opportunities.

- Topical authority, clean formatting, and original data are your biggest citation levers.

- Every AI search tool picks sources differently. One strategy won’t work on every AI model.

What are LLM Citations?

When you ask an AI assistant something, and it points to a specific source, either by naming it or linking to it, that’s a citation. It’s the AI’s way of telling you where the information originated.

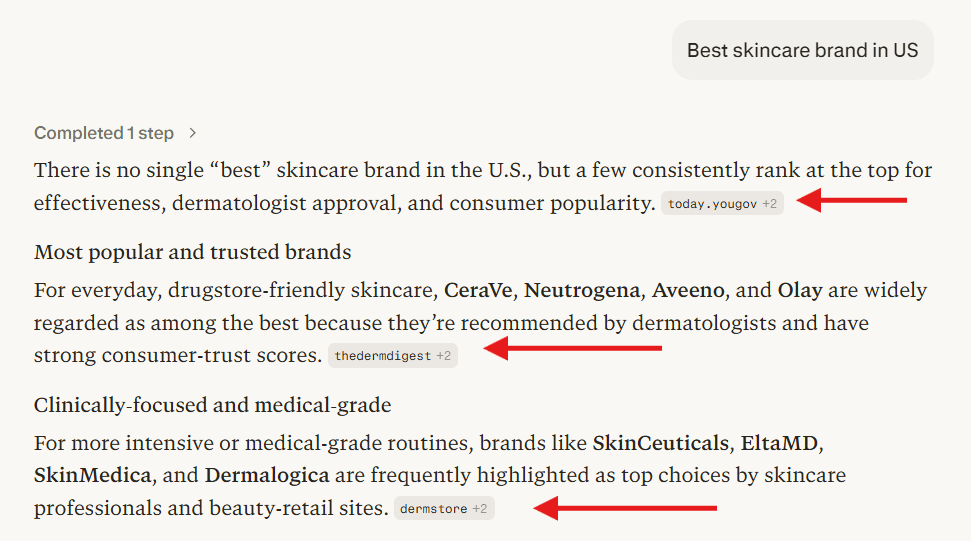

Here’s a simple example. I asked Perplexity, “Best skincare brand in US,” and the screenshot above shows how it responded.

AI mentions, by contrast, are direct references to a brand or entity within a response, without any hyperlink attached. They still contribute to brand awareness and visibility, just without the clickable element.

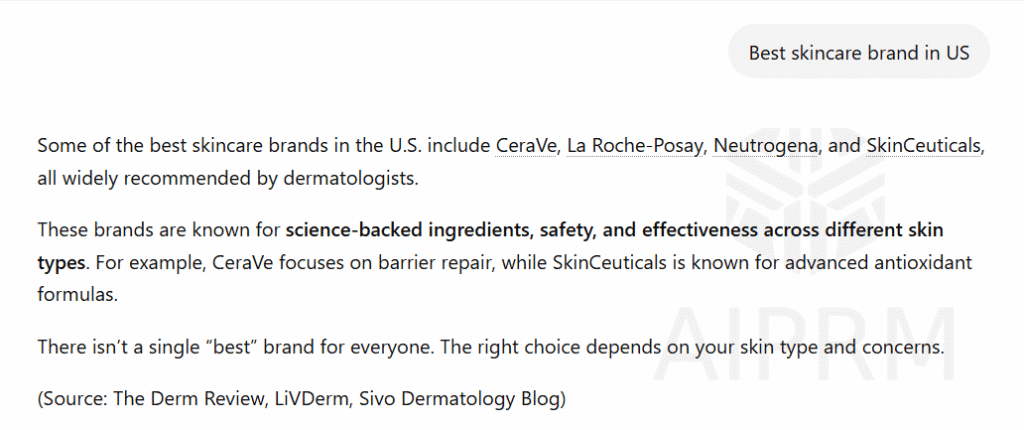

To see this in action, I asked ChatGPT the same question: “Best skincare brand in US.” It returned a solid answer and named the source it pulled from, but there was no link to click through.

This is where AI citations carry more weight. A cited source gets the click, which drives traffic and builds authority. A mentioned source builds brand recognition and trust, but without a direct referral.

It may seem like a small difference at first glance, but it matters more than most people realize.

How AI Systems Actually Retrieve and Cite Content

If you want to optimize for AI citations, the first step is understanding how LLMs actually pull in information. Two different mechanisms are at play, and the difference between them matters a lot.

Two Ways AI Pulls Information to Answer Questions

Training data is essentially the model’s long-term memory. It’s everything the model absorbed before it was ever deployed. The problem is that it’s slow to update, and you have no real say in what gets included. If your site wasn’t already established a few years back, there’s a reasonable chance it never made it in.

Retrieval-augmented generation (RAG) is the real-time layer. Rather than relying solely on pre-existing knowledge, the model actively searches the web during a query and pulls in relevant pages to construct its response. This live retrieval is currently the most viable path to earning a citation.

By 2026, this has evolved further. We’re now seeing Agentic RAG, where AI tools go beyond running a single search. The behavior is closer to a human researcher: cross-referencing multiple sources, fact-checking claims, and validating information before presenting it to the user.
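To make the retrieval step more concrete, here’s a toy retrieve-then-cite sketch in Python. It’s an illustration under heavy assumptions, not how ChatGPT, Perplexity, or any other tool actually works: real systems query a live search index and rank pages with far more sophisticated methods, while this version scores a small, hypothetical set of pages by word overlap and returns the best matches as the sources an answer would cite.

```python
import re

# Hypothetical mini "web index": URL -> page text (illustrative only).
PAGES = {
    "https://example.com/pregnancy-skincare": "Retinol and high-dose salicylic acid are best avoided during pregnancy.",
    "https://example.com/moisturizer-guide": "How to choose a moisturizer for dry or oily skin.",
    "https://example.com/sunscreen-faq": "Mineral sunscreen is often recommended during pregnancy.",
}

def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, pages: dict[str, str], k: int = 2) -> list[str]:
    """Rank pages by how many query words they share and return the top-k URLs."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(text)), url) for url, text in pages.items()]
    scored = [(score, url) for score, url in scored if score > 0]  # drop irrelevant pages
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best score first, URL breaks ties
    return [url for _, url in scored[:k]]

def answer_with_citations(query: str, pages: dict[str, str]) -> dict:
    """Gather context from retrieved pages and attach those pages as the citations."""
    sources = retrieve(query, pages)
    context = " ".join(pages[url] for url in sources)
    return {"answer_context": context, "citations": sources}

result = answer_with_citations("What skincare ingredients should I avoid during pregnancy?", PAGES)
print(result["citations"])
```

The takeaway carries over to real systems: only pages that get retrieved can be cited, so relevance to the query comes first.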

When AI Systems Search the Web

Not every search triggers real-time retrieval. But certain query types almost always do.

When someone asks about something that shifts frequently, like breaking news or recent product releases, the AI doesn’t fall back on its training data. It goes and fetches current information. The same applies to health, finance, legal, and safety topics, because the cost of being wrong is simply too high. The model looks for a source it can actually cite.

Data and statistics work the same way. Ask for a number, and the AI will almost always go to the live web to pull it from a source. Niche topics follow the same logic. When a subject wasn’t covered thoroughly in training data, the model defaults to searching outward because it lacks enough stored knowledge to answer with confidence.

If your content falls into any of these categories, you’re already well-positioned to get cited by an LLM.

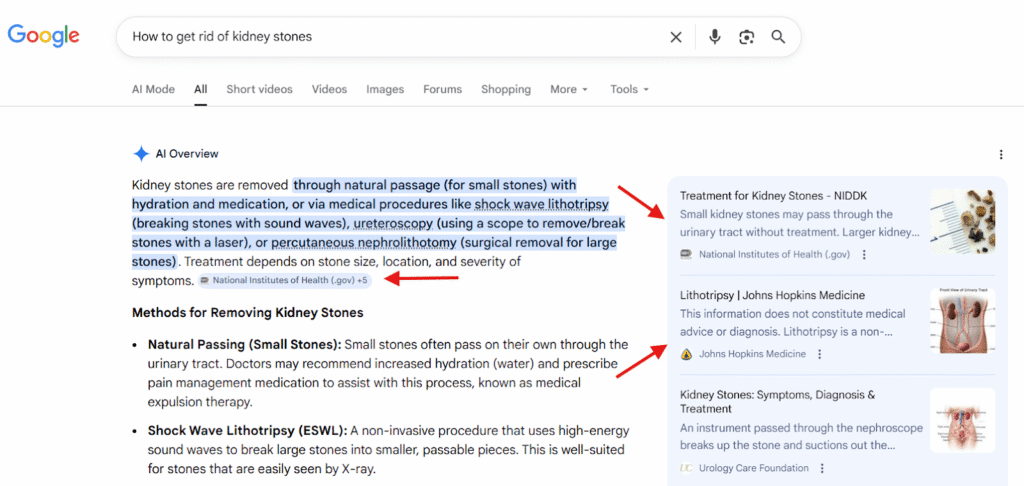

Here’s a quick real-world example: I ran a health-related query on Google. Google’s AI Overviews returned an answer with citations attached, so I could verify the source directly.

How One Question Becomes Many

Most people think AI search tools process a question the same way a human would. That’s not really how it works. When someone enters a query, AI tools often break it down into several smaller sub-queries running simultaneously in the background. This is called query fan-out, and it remains one of the most overlooked concepts in AI visibility strategy.

For example, if someone searches “What skincare ingredients should I avoid during pregnancy?”, the AI isn’t just processing that one question. It’s also generating sub-queries like “unsafe skincare ingredients during pregnancy,” “retinol safety during pregnancy 2026,” and “dermatologist-approved pregnancy skincare routine,” all running in parallel.

Each of those sub-queries is its own ranking opportunity, and many of them go unclaimed.

This should change how you approach content structure. If your page only addresses the main question while ignoring everything that branches from it, you’re leaving the majority of those opportunities on the table. Build your content around complete topics, not just the primary keyword. That way, you increase your chances of appearing across the full fan-out.

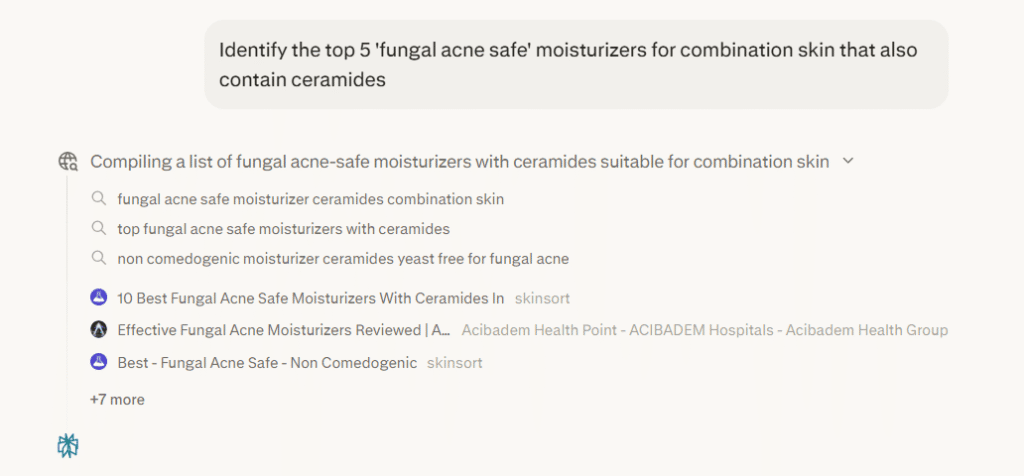

Here’s a practical example. I ran a query for “top 5 fungal acne safe moisturizers.”

See the ‘Thinking’ dropdown?

One question, and the AI immediately expanded it into six targeted searches to pull the most accurate and structured data. To earn citations from LLMs, your content needs to be the strongest answer for those specific sub-queries.
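You can apply the same idea to your own pages. The sketch below assumes you’ve listed the likely sub-queries yourself (AI tools don’t publish their fan-out), and it uses a crude stand-in for semantic matching: a sub-query counts as covered when at least half its words appear in one of your page’s headings. The function names and the 0.5 threshold are our own choices, not a documented method.

```python
import re

def words(text: str) -> set[str]:
    """Lowercase word set, ignoring punctuation and hyphens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def fan_out_coverage(sub_queries: list[str], headings: list[str], threshold: float = 0.5) -> dict[str, bool]:
    """Mark each sub-query as covered if some heading contains at least
    `threshold` of its words (a rough proxy for semantic relevance)."""
    coverage = {}
    for sub_query in sub_queries:
        sq_words = words(sub_query)
        if not sq_words:
            coverage[sub_query] = False
            continue
        best = max((len(sq_words & words(h)) / len(sq_words) for h in headings), default=0.0)
        coverage[sub_query] = best >= threshold
    return coverage

# Hypothetical sub-queries (written by hand) and the headings on your page.
SUB_QUERIES = [
    "unsafe skincare ingredients during pregnancy",
    "retinol safety during pregnancy 2026",
    "dermatologist-approved pregnancy skincare routine",
]
PAGE_HEADINGS = [
    "Which skincare ingredients are unsafe during pregnancy?",
    "Is retinol safe during pregnancy?",
]

for sub_query, covered in fan_out_coverage(SUB_QUERIES, PAGE_HEADINGS).items():
    print(f"{'covered' if covered else 'MISSING'}: {sub_query}")
```

Anything marked as missing, like the routine question here, is a candidate for a new section on the page.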

What Actually Determines Whether AI Cites Your Content

These are the main factors that influence LLM citations. Get these right, and the rest becomes much more manageable.

1. Content Freshness is a Core Citation Signal

AI tools carry a strong recency bias. Newer models are being trained to detect manipulation, so if a piece of content carries a 2026 date but the underlying facts align with 2021 training data, the model treats that as a red flag.

The actual factual claims in your content, what researchers call “Subject-Relation-Object” triplets, need to reflect genuine updates, not just a refreshed timestamp. Content that hasn’t been meaningfully changed in a while is practically invisible to these systems.

Simply swapping out a year in the headline won’t cut it. AI models can identify when a so-called update is purely cosmetic. Changing “2025” to “2026” without touching anything else doesn’t read as fresh.

What actually signals recency is updated data, revised language, and added context. High-traffic pages should get a proper review at least once a quarter, and anything data-heavy needs even more attention than that.

2. Domain Authority in AI Search

High-authority domains (think DR 80 and above) continue to get referenced by LLMs regularly. They’re essentially a reliable default for AI systems. But the more notable shift in 2026 is that topical authority is gaining ground.

A niche site focused entirely on industrial drone repair can realistically outrank a large general tech publication for LLM citations on that specific topic.

AI models are increasingly recognizing that a specialized, focused source tends to be more accurate and trustworthy than a broad platform covering everything at a surface level. For smaller publishers, depth of focus is becoming a genuine competitive edge.

3. Semantic Relevance Over Keyword Matching

AI doesn’t measure relevance the way keyword-based search engines do. It isn’t looking for exact term matches on your page. The core question it’s asking is much simpler: does this content actually address what the user wants to know?

A focused page that directly answers one question will often outperform a longer, more comprehensive page that approaches the topic from multiple angles. When it comes to relevance signals for AI search, depth consistently beats breadth.

The simplest way to benchmark this?

Look at what sources are already being cited in AI Overviews and other AI-generated responses for your target queries. Pay attention to how specific those sources are. That’s the standard you’re working toward.

4. Clean Formatting Gets You Cited More Often

AI models don’t consume content the way humans do. They scan for specific chunks they can pull cleanly and credit accurately. If your answer only makes sense after reading several paragraphs, the model will likely move past it.

Clean formatting comes down to making your content easy to extract. That means keeping paragraphs short and focused. Use question-based headings that reflect how people actually search. Write answers that stand on their own, without depending on pronouns or context from elsewhere on the page. Avoid dense blocks of text where the actual answer is buried somewhere in the middle.

Microsoft’s guidance on structuring content for AI consumption makes this clear: the easier it is to pull a direct answer from your page, the more likely that answer appears in an AI-generated response.

Pro Tip: Not sure how your content is performing across AI platforms right now? Track My Visibility’s free mini report gives you a quick snapshot of where your brand is being cited, which platforms are picking you up, and where you’re getting skipped entirely.

8 Tactics to Earn More LLM Citations

Once you understand what AI search tools look for when selecting content, the next step is knowing which strategies actually help your brand earn more AI references. Here are the 8 most effective ones.

1. Start With a Citation Audit

Before creating anything new, figure out what’s already getting LLM citations in your space. Use ChatGPT, Perplexity, Claude, Gemini, and Google’s AI Overviews, and ask the same questions your customers are typing in.

Look closely at what surfaces. Which sources are being cited? How frequently? For what types of questions? Manual testing takes effort, but it gives you the most accurate picture of what’s genuinely working.

The frustration of not knowing where you stand is more common than most eCommerce brands expect.

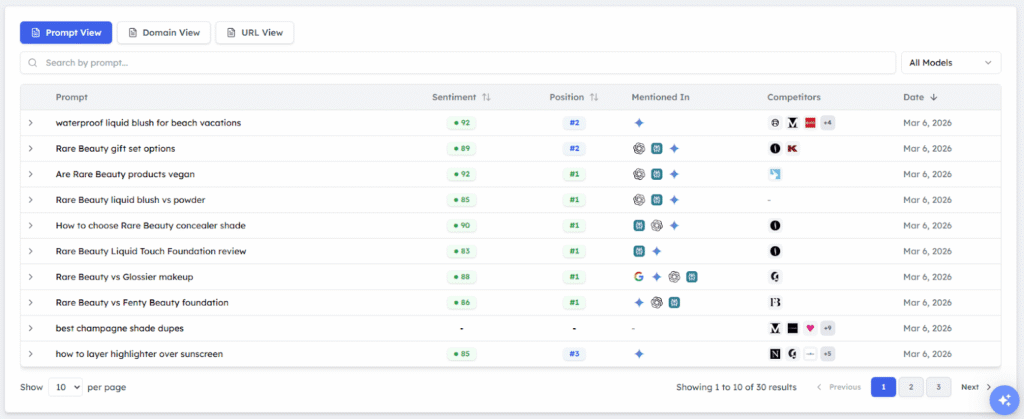

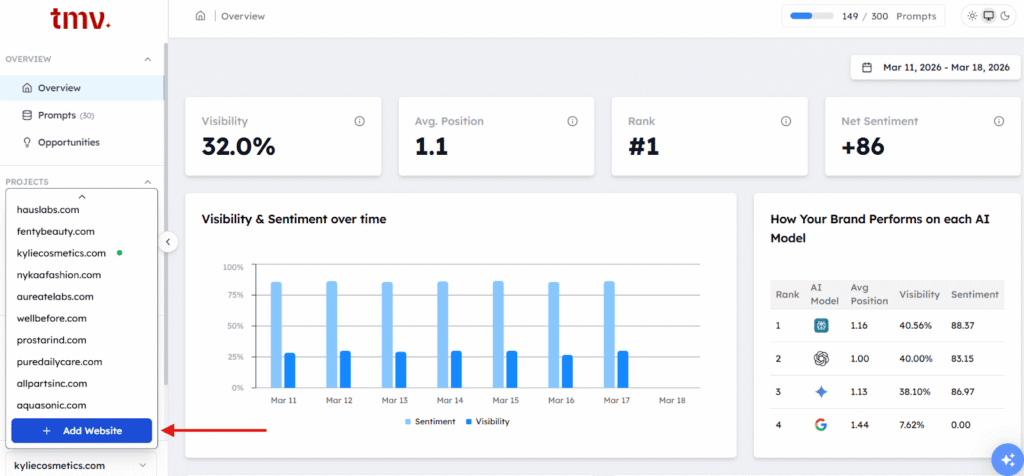

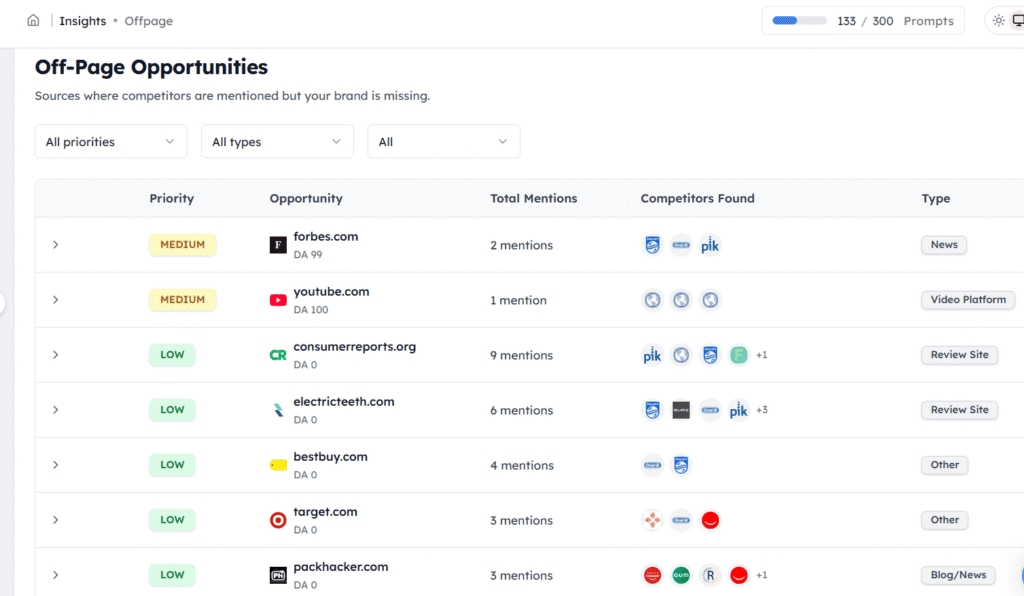

To do this at scale, a tool like Track My Visibility is worth considering since it’s built to monitor how your brand and competitors show up across multiple AI platforms.

Focus on three things: which competitors appear most consistently as cited sources, what format their content is in when it gets pulled into an answer, and whether there are query types where no one is being cited. That last point is your biggest opening. It signals a gap the AI is trying to fill, and you can position your content to fill it.

Once you complete this audit, you have your baseline. Every piece of content you create or optimize going forward should be measured against what you found.
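If you log your manual tests consistently, a short script can surface those signals. This sketch assumes a hypothetical log format, one record per question and platform with the domains cited in the answer (all queries and domains here are made up). It counts how many answers cite each domain and lists queries where no platform cited anything, which are your openings.

```python
from collections import Counter

# Hypothetical audit log: one row per (question, platform) test.
AUDIT = [
    {"query": "best fungal acne safe moisturizer", "platform": "Perplexity", "cited": ["competitor-a.com", "reddit.com"]},
    {"query": "best fungal acne safe moisturizer", "platform": "ChatGPT", "cited": ["competitor-a.com"]},
    {"query": "is squalane safe for fungal acne", "platform": "Perplexity", "cited": ["competitor-b.com"]},
    {"query": "fungal acne safe sunscreen for oily skin", "platform": "ChatGPT", "cited": []},
]

def cited_domain_counts(rows: list[dict]) -> Counter:
    """Count how many answers cited each domain."""
    counts = Counter()
    for row in rows:
        counts.update(set(row["cited"]))  # set() so one answer counts a domain only once
    return counts

def uncited_queries(rows: list[dict]) -> list[str]:
    """Return queries where no tested platform cited any source."""
    any_citation: dict[str, bool] = {}
    for row in rows:
        any_citation[row["query"]] = any_citation.get(row["query"], False) or bool(row["cited"])
    return sorted(query for query, cited in any_citation.items() if not cited)

print(cited_domain_counts(AUDIT).most_common())
print(uncited_queries(AUDIT))
```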

Pro Tip: Manual testing without a structured framework means you’ll likely audit the wrong signals entirely. The All-in-One AEO & GEO Audit Checklist tells you exactly what to look for across every AI platform, so no citation opportunity slips through the cracks.

2. Identify and Close Citation Gaps

A citation gap is when a competitor is getting cited by AI tools for a topic, and you aren’t. These should be your priority, because the validation already exists. Someone has confirmed that AI systems are pulling sources for those queries. Your job is simply to earn that position.

Begin by running competitor URLs through the Track My Visibility tool. Then go deeper. Pull from your own customer reviews, post-purchase surveys, and live chat logs to surface questions that come up repeatedly.

Browse Reddit threads, niche forums, and Q&A platforms for questions in your space that still lack a clear, reliable answer. Autocomplete suggestions and “People Also Ask” boxes are also worth checking to get a full picture of what your audience is actually searching for.

Once you spot a gap, don’t just copy what’s already being cited. Write a sharper, more complete, self-contained answer than what currently exists. That’s what gets you the spot.
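Spotting the gaps themselves is a simple set comparison. In this sketch, the cited domains per query would come from your audit notes or a monitoring tool’s export; the `citation_gaps` helper, queries, and domains are hypothetical.

```python
def citation_gaps(cited_by_query: dict[str, set[str]], you: str, competitors: set[str]) -> dict[str, set[str]]:
    """Return queries where at least one competitor is cited and you are not,
    mapped to the competitors that currently hold the citation."""
    gaps = {}
    for query, cited in cited_by_query.items():
        winners = cited & competitors
        if winners and you not in cited:
            gaps[query] = winners
    return gaps

# Hypothetical cited domains per query, e.g. collected during your audit.
CITED = {
    "best niacinamide serum": {"competitor-a.com", "yourbrand.com"},
    "niacinamide vs vitamin c": {"competitor-b.com"},
    "niacinamide for sensitive skin": {"reddit.com"},
}

print(citation_gaps(CITED, "yourbrand.com", {"competitor-a.com", "competitor-b.com"}))
```

Queries where only community sites are cited, like the last one here, don’t show up as competitor gaps, but they’re still worth a look as open opportunities.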

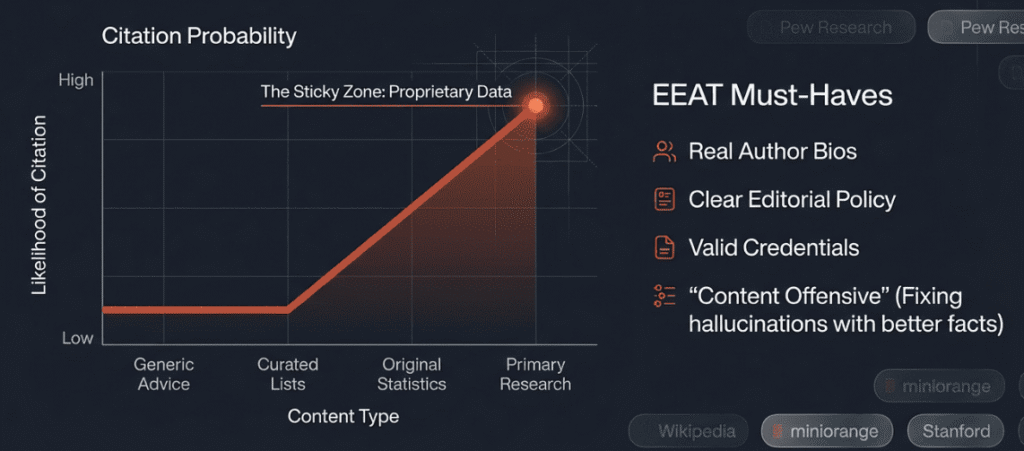

3. Produce and Publish Original Research Data

Original research is one of the most dependable ways to get cited by LLMs. When a model encounters a question that involves statistics or data points, it actively looks for sources to reference.

That’s what makes original data so effective. A vague, unsupported claim gets passed over. A specific, sourced number gets picked up.

You don’t need a dedicated research team to pull this off. Surveys with even 50 to 100 respondents can do the job, as long as the methodology is transparent. Internal usage data, aggregated insights from your customers, and benchmarks generated by your own tools all qualify as original data.

Presentation matters just as much as the data itself. Lead with the number, briefly note where it came from, and keep the context tight so the stat holds up on its own. Also, if a key finding only appears inside a table, write it out as a plain sentence too. AI systems pull text far more reliably than table cells.

4. Optimize for EEAT (Experience, Expertise, Authority, and Trustworthiness)

EEAT is no longer just a Google ranking factor. AI models now use these same signals to evaluate whether your content is worth citing.

The indicators are fairly clear: author bios with verifiable credentials, transparent editorial standards, named contributors on research content, and links from reputable sources. These all signal to AI systems that a real, knowledgeable person is behind the content.

If your page could have been written by anyone, it likely won’t be treated as a credible source. Put a name to it, back it with credentials, and leave a paper trail.

5. Keep your Content Update Cycle Consistent

Keeping content fresh isn’t a one-time task. It has to be baked into how your editorial calendar works, not something your content team scrambles to do once a year.

How often you revisit content depends on what kind it is. Pages built around data and statistics need a look every three to six months, or sooner if the numbers shift. Evergreen how-to guides can hold up for about a year between reviews.

Product comparisons and tool roundups age faster, so those deserve a quarterly check. For trend and industry content, update it as the landscape changes.

Whenever you update a page, show a visible “last updated” date. It works as a clear trust signal for both AI-driven search and organic search results, so keep it prominent, not buried in the footer.
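If your CMS can export each page’s content type and last review date, you can turn this cadence into a simple overdue list. This is a sketch under assumptions: the content-type labels are our own, the 3-to-6-month range for data pages uses the shorter end, a quarter is approximated as 90 days, and trend content has no fixed date because it’s updated when the landscape changes.

```python
from datetime import date, timedelta
from typing import Optional

# Review intervals based on the cadence above; day counts are approximations.
REVIEW_INTERVAL_DAYS = {
    "data": 90,         # data and statistics pages: every 3-6 months (shorter end used)
    "comparison": 90,   # product comparisons and tool roundups: quarterly
    "evergreen": 365,   # evergreen how-to guides: about once a year
    "trend": None,      # trend and industry content: update as the landscape changes
}

def next_review(content_type: str, last_reviewed: date) -> Optional[date]:
    """Return the next scheduled review date, or None for event-driven content."""
    interval = REVIEW_INTERVAL_DAYS[content_type]
    return None if interval is None else last_reviewed + timedelta(days=interval)

def overdue(pages: list[tuple[str, str, date]], today: date) -> list[str]:
    """List the URLs whose scheduled review date has already passed."""
    result = []
    for url, content_type, last_reviewed in pages:
        due = next_review(content_type, last_reviewed)
        if due is not None and due < today:
            result.append(url)
    return result

# Hypothetical export: (URL, content type, last reviewed).
PAGES = [
    ("/blog/skincare-stats", "data", date(2026, 1, 5)),
    ("/guides/how-to-layer-serums", "evergreen", date(2025, 6, 1)),
    ("/blog/ai-trends", "trend", date(2024, 1, 1)),
]
print(overdue(PAGES, today=date(2026, 4, 10)))
```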

6. Structure Content for Easy AI Extraction

This is likely the highest-impact adjustment you can make today.

Answer capsules are brief, self-contained responses placed directly after a question-style heading. If you want a deeper breakdown, our guide on optimizing content for AI answers walks through the full method.

Here’s what that looks like in practice:

Heading: Which skincare ingredients should you avoid during pregnancy?

Answer capsule: Retinol, salicylic acid in high concentrations, and chemical sunscreens are generally considered unsafe during pregnancy and should be avoided.

After the capsule, you follow up with supporting detail and context.

One rule to stick to: Keep hyperlinks out of the capsule itself.

Place your links in the body content below the capsule, not inside it. Always lead with the answer. Don’t make the reader, or the AI, dig through background information just to get to the point.
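If you publish from a CMS or markdown files, you can check capsules before they go live. The sketch below encodes the rules from this section (question-style heading, no links in the capsule, no opening pronoun that depends on earlier context) plus a length cap. The 60-word limit is our own rule of thumb, not a documented threshold from any AI platform, and the link check only catches HTML anchors, markdown links, and bare URLs.

```python
import re

# Matches an HTML anchor, a markdown link, or a bare URL.
LINK_PATTERN = re.compile(r"<a\s[^>]*href=|\[[^\]]+\]\([^)]+\)|https?://", re.IGNORECASE)
LEADING_PRONOUNS = {"it", "this", "that", "these", "they", "those"}

def lint_capsule(heading: str, capsule: str, max_words: int = 60) -> list[str]:
    """Return the problems found in a heading + answer capsule pair (empty list = OK)."""
    problems = []
    if not heading.strip().endswith("?"):
        problems.append("heading is not phrased as a question")
    if LINK_PATTERN.search(capsule):
        problems.append("capsule contains a hyperlink")
    tokens = capsule.split()
    if len(tokens) > max_words:
        problems.append(f"capsule is {len(tokens)} words (limit {max_words})")
    if tokens and tokens[0].lower().strip(",.") in LEADING_PRONOUNS:
        problems.append("capsule opens with a pronoun that depends on outside context")
    return problems

print(lint_capsule(
    "Which skincare ingredients should you avoid during pregnancy?",
    "Retinol, salicylic acid in high concentrations, and chemical sunscreens are "
    "generally considered unsafe during pregnancy and should be avoided.",
))
print(lint_capsule("Pregnancy skincare", "It is best to avoid [retinol](https://example.com/retinol)."))
```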

7. Build Citation Presence Beyond Your Own Site

Relying only on your website won’t get you cited across every AI platform. Perplexity, for instance, pulls heavily from user-generated content on Reddit, Quora, and LinkedIn. That means your AI visibility strategy needs to work off your domain as well.

You’ll want to join Reddit discussions, post long-form content on LinkedIn, answer questions on Quora, and build relationships with journalists over time.

Once you’re doing this regularly, tracking what’s actually working becomes important. A tool like Track My Visibility can help here. It shows which platforms are picking up your content and where AI tools are already referencing you, so you can focus on what’s gaining traction rather than guessing.

When several credible platforms point back to the same data from you, AI tools are much more likely to treat you as the source.

8. Implement Technical Signals

Technical optimization for AI search follows a lot of the same principles as traditional SEO, but a few things matter specifically for citations.

Schema markup, such as Article, FAQ, Organization, and Product schemas, helps AI systems understand your content properly. FAQ schema works particularly well because it matches the question-and-answer format that AI models naturally prefer. Running your pages through Google’s Rich Results Test from time to time is good practice, since broken markup can silently hurt your citation rate.
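Here’s a minimal sketch of generating FAQ structured data as JSON-LD from your question-and-answer pairs. The schema.org types and properties used (FAQPage, mainEntity, Question, acceptedAnswer, Answer) are the standard vocabulary; the `faq_jsonld` helper is our own. Keep in mind that since 2023 Google shows FAQ rich results only for a limited set of authoritative government and health sites, so treat the markup as a machine-readability aid rather than a guaranteed search feature.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)

print(faq_jsonld([
    ("Which skincare ingredients should you avoid during pregnancy?",
     "Retinol, salicylic acid in high concentrations, and chemical sunscreens are "
     "generally considered unsafe during pregnancy and should be avoided."),
]))
```

Paste the output into a `<script type="application/ld+json">` tag on the page, then validate it with the Rich Results Test.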

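llms.txt is another addition worth making. It’s a proposed standard: a markdown file at your site root that gives AI tools a curated summary of your site and links to your most important pages. It’s often compared to robots.txt, but it doesn’t control crawler access. Adoption is still limited, but getting ahead of it now makes sense.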
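For illustration, here’s a small generator that follows the structure described in the llms.txt proposal: an H1 with the site name, a blockquote summary, then H2 sections listing markdown links with optional notes. The site name, URLs, and section names are placeholders, and since the format is still a proposal, check the current spec before relying on the details.

```python
def build_llms_txt(site_name: str, summary: str, sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Assemble llms.txt content: H1 title, blockquote summary, then H2 sections
    of markdown links, each given as (title, url, optional note)."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for section, links in sections.items():
        lines.append(f"## {section}")
        lines.append("")
        for title, url, note in links:
            entry = f"- [{title}]({url})"
            lines.append(f"{entry}: {note}" if note else entry)
        lines.append("")
    return "\n".join(lines)

text = build_llms_txt(
    "Example Skincare",
    "Dermatologist-formulated skincare for sensitive skin.",
    {
        "Guides": [("Pregnancy-safe skincare", "https://example.com/pregnancy-skincare", "Ingredients to avoid and safe swaps")],
        "Products": [("Moisturizers", "https://example.com/moisturizers", "")],
    },
)
print(text)
```

Save the output as llms.txt at your site root (for example, https://example.com/llms.txt).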

For local brands, keeping your NAP (Name, Address, Phone) consistent across all platforms is essential. Page speed also matters more than people realize. If your site loads slowly, AI systems may time out before they can pull your answer at all.
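Checking NAP consistency by hand gets tedious once you’re listed on several platforms. This sketch normalizes each listing (lowercase, no punctuation, digits-only phone) so harmless formatting differences don’t count as mismatches, then flags platforms that still differ from your canonical record. The listings are made up, the phone handling assumes 10-digit US-style numbers, and abbreviations like “St.” versus “Street” are deliberately still flagged so you can standardize them.

```python
import re

def normalize_nap(name: str, address: str, phone: str) -> tuple[str, str, str]:
    """Normalize a Name/Address/Phone record so formatting noise isn't a mismatch."""
    def clean(text: str) -> str:
        text = re.sub(r"[^\w\s]", " ", text.lower())  # replace punctuation with spaces
        return " ".join(text.split())                 # collapse repeated whitespace
    digits = re.sub(r"\D", "", phone)[-10:]  # keep the last 10 digits (assumes US-style numbers)
    return clean(name), clean(address), digits

def inconsistent_listings(listings: dict[str, tuple[str, str, str]], canonical: str) -> list[str]:
    """Return the platforms whose normalized NAP differs from the canonical listing."""
    reference = normalize_nap(*listings[canonical])
    return sorted(platform for platform, nap in listings.items() if normalize_nap(*nap) != reference)

# Hypothetical listings: platform -> (name, address, phone).
LISTINGS = {
    "website": ("Glow Labs", "12 Main St., Austin, TX 78701", "(512) 555-0134"),
    "google": ("Glow Labs", "12 Main St, Austin TX 78701", "+1 512-555-0134"),
    "yelp": ("Glow Labs Skincare", "12 Main St, Austin, TX 78701", "512.555.0134"),
}
print(inconsistent_listings(LISTINGS, canonical="website"))
```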

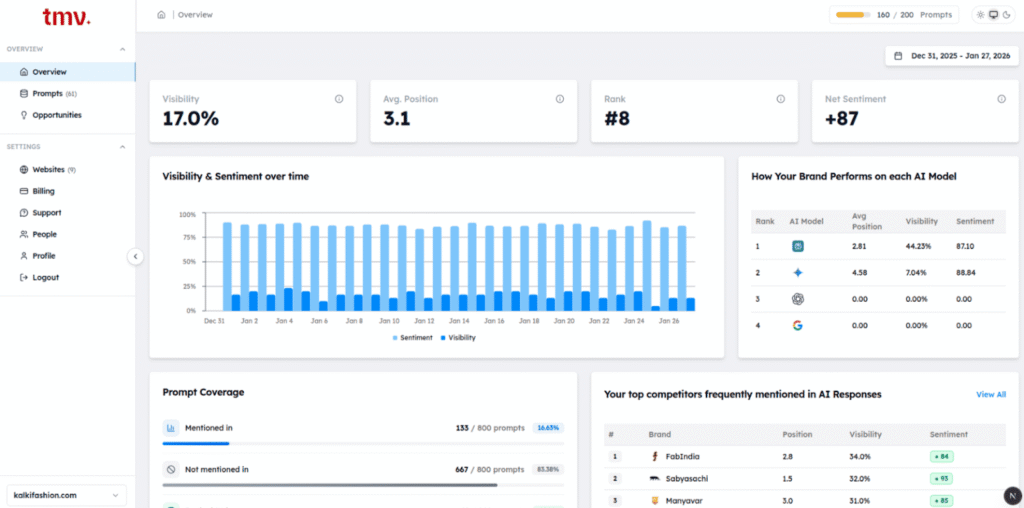

Track My Visibility gives you a clear picture of where you stand. You can see which AI models are citing you, which prompts your competitors are winning that you aren’t, and where your citation quality has gaps. That information tells you exactly where to focus your technical and content work, rather than optimizing without direction.

Platform-by-Platform Breakdown of AI Citation Preferences

The universal strategies covered earlier work across all platforms. That said, each AI system has its own preferences when it comes to picking sources.

| Platform | What it prefers | How to win visibility | Best citation sources | Best content format |
| --- | --- | --- | --- | --- |
| ChatGPT (OpenAI) | High-authority sites and pages ranking in search | Build authority and earn major media coverage | Editorial media, industry publications | In-depth guides, data-backed explainers |
| Perplexity (Perplexity AI) | Community content from Reddit, Quora, LinkedIn | Prioritize off-site and community content | Reddit, Quora, LinkedIn | Q&A posts, expert opinions |
| Google AI Overviews (Google) | Your own high-ranking indexed pages | Improve SEO, EEAT, schema, and page quality | First-party site content | Structured evergreen pages |
| Claude (Anthropic) | Well-sourced, transparent reasoning | Publish citation-ready, sourced content | Community content from Reddit, Quora, and LinkedIn | Methodical explainers |
| Gemini (Google) | Expert, well-cited content | Create precise reference content | First-party experts, trusted sources | Technical deep dives |

Why LLM Citations Matter for eCommerce Brands

The numbers make the clearest case for why LLM citations matter.

Adobe’s 2025 holiday season report revealed that retail AI-driven traffic surged 693% year-over-year, with travel, financial services, and tech sectors all recording strong gains. What makes this even more compelling is that traffic arriving through AI answers tends to convert at notably higher rates than average.

A Reddit user shared that after optimizing for AI visibility, AI became their third-largest traffic source and noted the traffic felt more qualified than traditional SEO traffic.

How to get your product recommended by AI — here’s what actually worked for me

by u/sunfe2009 in indiehackers

Visitors who land on your site via an AI citation are already pre-qualified. The AI has essentially vouched for your credibility before they even arrive.

For eCommerce brands, the window to establish AI visibility before the space gets competitive is still open. Most eCommerce brands haven’t acted on this yet, and those that build citation authority early will be far harder to displace once the landscape shifts.

That said, it’s worth grounding expectations in reality.

As Rand Fishkin put it, ‘Citations are correlated, but not causal with brand appearances in the results.’

Citations carry real weight as a signal, but they’re one piece of a larger visibility picture. The actual goal is consistent presence across AI platforms, not simply chasing citation counts.

The connection to traditional search is also worth noting. Nearly everything that improves your chances of being cited by LLMs, including original research, EEAT signals, clean formatting, and topical authority, also supports higher rankings in conventional search.

This is the foundation behind AEO (answer engine optimization) and GEO (generative engine optimization), and understanding how they connect to SEO makes the overall strategy much easier to act on.

How to Track and Monitor your LLM Citation Performance

You can’t improve what you’re not measuring. If you want to approach this properly, gut feelings won’t cut it. The right place to start is understanding how to monitor your AI search visibility across the platforms that actually matter to your business.

Method 1: Building a Free Manual Testing Routine

The simplest approach is often the most revealing. Choose 20 to 30 specific questions your customers regularly ask, then run them through ChatGPT, Perplexity, Claude, Google AI Overviews, and Gemini every month.

Don’t stop at looking for your brand name. Document everything, including direct links, unlinked mentions, competitor appearances, and ghost citations.
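A spreadsheet works fine for this, but if you’d rather script it, here’s a sketch that turns a monthly log into a linked-citation rate per platform. The outcome labels are our own shorthand for the categories above, and the log entries are hypothetical.

```python
from collections import defaultdict

# Our own shorthand labels for what each test turned up.
OUTCOMES = {"linked", "unlinked_mention", "ghost", "competitor_only", "absent"}

def citation_rate(log: list[dict]) -> dict[tuple[str, str], float]:
    """Share of tests per (month, platform) where your brand got a linked citation."""
    totals: dict[tuple[str, str], int] = defaultdict(int)
    linked: dict[tuple[str, str], int] = defaultdict(int)
    for entry in log:
        if entry["outcome"] not in OUTCOMES:
            raise ValueError(f"unknown outcome: {entry['outcome']}")
        key = (entry["month"], entry["platform"])
        totals[key] += 1
        if entry["outcome"] == "linked":
            linked[key] += 1
    return {key: linked[key] / totals[key] for key in totals}

# Hypothetical log entries from two monthly test runs.
LOG = [
    {"month": "2026-01", "platform": "Perplexity", "question": "q1", "outcome": "linked"},
    {"month": "2026-01", "platform": "Perplexity", "question": "q2", "outcome": "competitor_only"},
    {"month": "2026-01", "platform": "ChatGPT", "question": "q1", "outcome": "unlinked_mention"},
    {"month": "2026-02", "platform": "Perplexity", "question": "q1", "outcome": "linked"},
    {"month": "2026-02", "platform": "Perplexity", "question": "q2", "outcome": "linked"},
]
for (month, platform), rate in sorted(citation_rate(LOG).items()):
    print(month, platform, f"{rate:.0%}")
```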

Method 2: Using GA4 and Dedicated Tools for Citation Tracking

In GA4, AI referral traffic shows up either as direct traffic (when no referrer is passed) or as referral traffic from platform domains. Create segments to isolate traffic from known AI sources like perplexity.ai, chatgpt.com, and chat.openai.com, then track those conversion rates separately.

The attribution picture is never perfect since some AI traffic shows up with no referrer at all. That said, even incomplete data gives you a clearer sense of which content is actually producing results.
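To build those segments consistently, it helps to keep one list of AI referrer domains. This sketch classifies a referrer URL or hostname by platform and also joins the list into a single regex you could use in a “matches regex” condition in a GA4 segment or filter. The domain list is an assumption and not exhaustive; check your own session source report for the hostnames you actually receive.

```python
import re
from urllib.parse import urlparse

# Assumed, non-exhaustive list of AI referrer domains -> platform name.
AI_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}

def classify_referrer(referrer: str) -> str:
    """Map a referrer URL or bare hostname to an AI platform, 'direct', or 'other'."""
    if not referrer:
        return "direct"  # no referrer: the visit lands in Direct and can't be attributed
    # Prefix bare hostnames with // so urlparse treats them as a network location.
    host = urlparse(referrer if "//" in referrer else f"//{referrer}").hostname or ""
    for domain, platform in AI_SOURCES.items():
        if host == domain or host.endswith("." + domain):
            return platform
    return "other"

# The same domains as one regex, with dots escaped.
AI_SOURCE_REGEX = "|".join(re.escape(domain) for domain in AI_SOURCES)

print(classify_referrer("https://chatgpt.com/"))
print(AI_SOURCE_REGEX)
```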

Tools like Track My Visibility are designed specifically to track brand presence across AI platforms. If AI visibility matters to your business, investing in at least one dedicated monitoring tool is a reasonable step.

How to Fix Negative Citations about your Brand

AI systems aren’t perfect. They sometimes pull outdated pricing, discontinued features, or old brand names, and there’s no formal way to dispute that with a chatbot.

The most practical fix is a content-first approach. If a model keeps surfacing incorrect information about your brand, track down the page it’s likely pulling from, update it, and make the accurate details as easy to extract as possible. Use answer capsules, lead with the correct fact, and include a clear “last updated” date.

AI models gravitate toward the freshest, most structured source available. If your updated page is easier to read and parse than the outdated one, the model is likely to shift its citations toward it over time. You’re not battling the algorithm. You’re just making the right answer more accessible than the wrong one.

Why your Content Isn’t Getting Cited by AI Models and How to Fix it

Even well-intentioned efforts can fall flat if you’re still relying on outdated SEO strategies. These are the most common ways eCommerce brands unknowingly reduce their AI visibility.

1. Hyperlinks Hurt Citation Chances

Many brands assume that linking within their best answers signals higher value. In reality, AI models are less likely to pull from and cite capsules that include hyperlinks. The link introduces ambiguity around attribution, so the AI tends to skip that capsule altogether.

The fix is simple: Place your links below the capsule, within the supporting body content. Keep the capsule itself clean and free of links.

2. Buried Answers Lose Citations

If your content opens with two or three paragraphs of context before reaching the actual point, you’re writing for an older version of search. Both AI systems and today’s readers expect the answer first. Background information, nuance, and caveats all belong after the core response, not in front of it.

The fix is simple: Restructure your content so the direct answer appears in the first one to two sentences after the heading.

3. Every AI Platform Has Its Own Citation Logic

Optimizing for Google AI Mode is a different task from optimizing for Perplexity. What earns you citations on ChatGPT won’t automatically translate to Claude. Each AI search platform has its own logic for how it picks and presents sources.

The fix is simple: Build a platform-specific content approach. Start by identifying which two or three AI platforms drive the most traffic to your site, then study what those platforms consistently cite.

4. Ignoring Third-Party Platforms

Focusing only on your own website means leaving a lot of citation potential untapped. Some platforms, Perplexity being the most obvious case, actively prefer community-driven content. If a relevant Reddit thread or LinkedIn post exists, they’ll often surface that before your brand’s official page.

You need a presence wherever your audience is already looking for answers, and those answers need to be good enough to reference.

The fix is simple: Identify the two or three off-site platforms most relevant to your niche and build a consistent presence there. For most eCommerce brands, that means Reddit and LinkedIn at minimum.

5. Unmonitored Mentions Damage Visibility

AI visibility works in both directions. If the general sentiment about your brand online leans negative, AI tools will reflect that, usually without any warning to you. Keeping an eye on your own site isn’t enough.

The fix is simple: You need a broader view of how AI systems are characterizing your brand across different sources. Run your brand name through the major AI platforms monthly and pay attention to which sources they’re pulling from. When a negative or outdated source keeps surfacing, review that page, then publish a cleaner, more structured alternative.

Quick Recap

LLM citations are fast becoming one of the most critical visibility metrics for eCommerce brands that depend on search traffic. The encouraging part is that the signals driving them, including freshness, authority, clean formatting, and original extractable content, are well within your control.

Brands that act on this now are positioning themselves ahead of the curve. Most competitors haven’t made a move yet, and that window is not going to stay open indefinitely.

Begin with the citation audit to understand where you currently stand. If you’d rather skip the manual work and monitor your AI visibility across platforms from a single dashboard, Track My Visibility is designed for exactly that. It tracks how frequently your brand gets cited across AI tools like ChatGPT, Perplexity, and Google’s AI Overviews, so you always know where you stand.

Regardless of where you begin, just start. Then build from there.

FAQs:

What does an LLM citation actually mean?

A citation happens when an AI assistant draws information from a source and acknowledges it in the response, either through a link or a named reference. It’s essentially the model saying, “Here’s where this came from.” The distinction between a linked citation and a plain mention is practically significant. A linked citation drives traffic back to your source. A mention builds awareness but sends no referral traffic. Linked citations are the goal worth optimizing for.

What does it take to get your content cited by AI?

Create content that AI systems can easily find, interpret, and extract from. That means keeping your content fresh with visible publish dates, establishing clear author credentials, and structuring answers as precise, standalone sentences that don’t rely on surrounding context to make sense. Original research and proprietary data carry extra weight here. Models tend to favor primary sources they can reference directly over pages that simply recap what someone else already said.

How many types of AI citations are there?

Citations in AI search broadly fall into four categories. The first is direct linked citations, where the source is named and hyperlinked. The second is attributed text citations, where the source is named but no link is provided. The third is paraphrased mentions, where your content clearly shaped the answer but received no credit.

The fourth is parenthetical citations, which are brief inline references embedded within a response. Linked, attributed citations are the ones worth pursuing. The remaining types bring little to no meaningful traffic.

Which AI platform is most likely to cite your content?

Perplexity leads when it comes to citing sources. It surfaces references consistently and prominently, making it a solid starting point if you want to track how your content shows up in AI-generated answers. Google’s AI Overviews work well for pages already performing in organic search. ChatGPT and Claude are more selective, generally pulling from high-authority and well-established sources.

What happens behind the scenes when an AI cites a source?

Most AI citations rely on retrieval-augmented generation (RAG). Rather than depending solely on training data, the model pulls from the live web, finds relevant pages, and builds its final answer around that content before attributing the source. This is where publishers have real leverage. RAG responds to signals you can control: content freshness, domain authority, and how easily your text can be extracted and parsed.

What makes ChatGPT more likely to cite your page?

ChatGPT leans heavily on authority signals. It favors established publications and trusted domains. To improve your odds, work on building domain authority, earning mentions from credible outlets, and keeping your content well-structured.

For ChatGPT visibility specifically, lead with the answer, keep paragraphs short, and don’t bury the key point. An exact snippet placed right after a question heading is the format most likely to get picked up.

Are AI models reliable when it comes to citing sources?

Not always. LLMs were built to generate useful responses, not to maintain a reference list. Citations happen most reliably when a RAG system retrieves specific content and ties it to the answer. Without that retrieval layer, models can state things confidently with no sourced backing.

Platforms like Perplexity cite sources consistently because they have strong retrieval systems built in. For publishers, the lesson is straightforward: the more direct and self-contained your answers are, the more value you offer to a retrieval system looking for something it can actually use.
